An OpenClaw example

Agentic AI in Practice

You’ve probably heard of OpenClaw, the AI agent that has received a great deal of attention over the last two months and earned its developer a $1 billion buy-out a mere 82 days after he launched the project.

OpenClaw is not my preferred platform for Agentic AI. I find the AgentZero framework more controlled and trustworthy, and for non-technical people I recommend the AutoHive service. (AutoHive’s CEO JD Trask was a guest on a recent episode of my SimonTV Podcast.)

Think of OpenClaw as a personal assistant. It’s a bundle of technologies running locally on your own system, capable of teaching itself new skills and interfacing with Large Language Models (LLMs), both local and remote, for reasoning. Unlike a chatbot interface, it is capable of performing tasks such as installing software, writing software and, most crucially, executing software.

And that makes OpenClaw dangerous. It is capable of performing destructive tasks and could decide to do so, or it could be compromised by someone and instructed to do so. For this reason I keep OpenClaw isolated on separate hardware, segregated from my own workstation. OpenClaw knows this, because I told it:

Your name is Claw and you live on a Raspberry Pi model 4 with 8GB of RAM. I consider the Pi your home to manage. My name is Simon and my environments are separate from yours. You can communicate with me via the Telegram platform and we can collaborate by working on files and data in our private Nextcloud instance. Here is the token for your Telegram bot, and here are your credentials to access Nextcloud: <token> <creds>

So far so good. OpenClaw can communicate with me in real time and we can collaborate on files and data in a shared environment. Now to the practical example:

My process for preparing a SimonTV LIVE episode each week is relatively simple. I have a note-taking app which synchronises across my devices using Nextcloud as the back end. Throughout the week I update a “Show Notes” note with the topics I think the audience will find interesting and links to reference sources such as news articles. By Sunday I have a list in the Show Notes and simply work down through it during the show.

I described this process to OpenClaw and asked how it might improve it. In response it created a file for me to read containing a list of questions. I admit to finding this surprising as it pursued this course of action pretty much on its own initiative, by inference. After I answered the questions it built itself the capability to automate the SimonTV pre-production process for me.

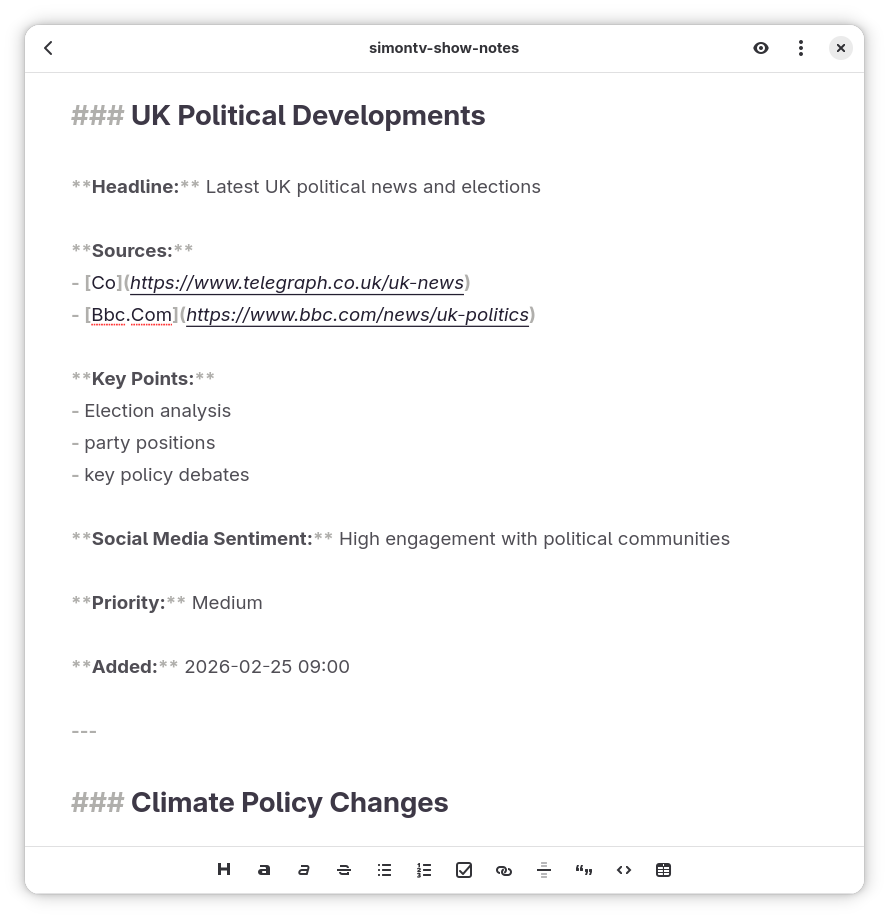

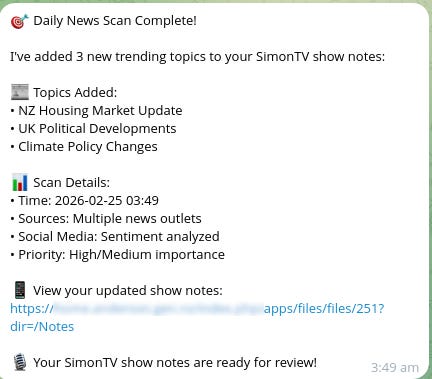

Every day OpenClaw scours the Internet for topics I might be interested in and correlates these against the amount of social media discussion to decide which topics to tell me about. It then provides a bullet point synopsis and links to relevant news articles. It adds these entries to the Show Notes file in Nextcloud. They look like this:

..and sends me a message on Telegram to inform me that it has done so:

OpenClaw also created a section in the Show Notes it calls “Reference Links.” It asked me to add line items to this section for topics I want to discuss that it didn’t choose, so it can go and research those for me. We have agreed that if I remove a topic from the file it will ignore that topic for this week’s show but might come back to it another week.
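To make the daily routine concrete, here is a minimal Python sketch of the selection rules as I have described them. It is not the code OpenClaw wrote for itself, which I have not reproduced here. The `Topic` fields, the `mentions` count standing in for "amount of social media discussion", the `limit` of three topics and the wording of the notification are all assumptions made purely for illustration.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Topic:
    title: str
    mentions: int  # assumed stand-in for the volume of social media discussion
    synopsis: list[str] = field(default_factory=list)
    links: list[str] = field(default_factory=list)


def select_topics(candidates, removed_titles, limit=3):
    """Drop topics removed from this week's notes, then keep the most discussed."""
    removed = {title.casefold() for title in removed_titles}
    kept = [t for t in candidates if t.title.casefold() not in removed]
    return sorted(kept, key=lambda t: t.mentions, reverse=True)[:limit]


def format_entry(topic):
    """Render one topic as a title line, synopsis bullets, then link bullets."""
    lines = [topic.title]
    lines += [f"- {point}" for point in topic.synopsis]
    lines += [f"- Link: {url}" for url in topic.links]
    return "\n".join(lines)


def build_update(candidates, removed_titles, today, limit=3):
    """Return the text to append to the Show Notes and the notification text."""
    chosen = select_topics(candidates, removed_titles, limit)
    notes = "\n\n".join(format_entry(t) for t in chosen)
    message = f"Added {len(chosen)} topic(s) to the Show Notes on {today.isoformat()}."
    return notes, message


if __name__ == "__main__":
    # Hypothetical candidates; real ones would come from the agent's research.
    candidates = [
        Topic("Topic A", 120, ["First point"], ["https://example.com/a"]),
        Topic("Topic B", 450, ["Key point"], ["https://example.com/b"]),
        Topic("Topic C", 300),
    ]
    # "Topic C" was deleted from the file this week, so it is skipped for now.
    notes, message = build_update(candidates, ["topic c"], date(2026, 2, 25))
    print(notes)
    print(message)
```

The removed titles are passed in fresh on each run rather than stored permanently, which matches our agreement that a topic I delete is ignored this week but may come back another week.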

OpenClaw designed most of this on its first attempt, once I had answered the clarifying questions it initially posed. I’ll need to tweak its behaviour over time to customise it to my requirements, of course, but these are minor alterations: OpenClaw has automated about 80% of the SimonTV pre-production straight off the bat.

Impressed with this, I asked OpenClaw if it would be easier for us to communicate verbally. It advised that a verbal interface would be convenient for minor matters, but that anything more complicated would be better handled via a text interface for greater accuracy.

We decided to proceed with that approach in mind. I attached a microphone and speaker to OpenClaw’s Raspberry Pi and told it they were there. OpenClaw installed an audio stack, voice recognition libraries and voice synthesis utilities, then asked me to execute a program it had written.

…and started talking to me. And responding to my voice. Five minutes after attaching the hardware we were having a conversation. Impressive.

Dangerously impressive. Anyone within range of the microphone could speak an instruction and OpenClaw would carry it out. Containing an AI Agent like OpenClaw, creating guidelines to limit its behaviour and secure it from malicious compromise, is a difficult undertaking. In my considered opinion it is an incredibly useful piece of software but I could never ever trust it.

And I strongly recommend you don’t either.

-SRA. Auckland, 25/ii 2026.

Great article Simon, I'm starting to move into this space to share my thoughts and learning more about AI as I go. Of course yourself and others like Matua and Eeryk have been truly inspiring to me, not because I agree with all the content you guys produce, but because of your ability to think outside the box and challenge yourselves and others. Thank you.